In this blog, we will set up continuous deployment to an AWS Lambda function using Bitbucket Pipelines.

CI/CD Flow:

- Use a Docker image as the build environment

- Cache the node_modules folder using the caches feature of Bitbucket Pipelines

- Run the npm install command

- Create a zip file

- Upload the zip file to the S3 bucket

- Update the Lambda function so that it picks up the new artifact from the S3 bucket
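The flow above can be sketched as a small shell script. This is only an illustration under stated assumptions: the bucket name, function name, and file names are the example values used later in this post, while the `run` helper, the `deploy` function, and the `DRY_RUN` switch are hypothetical names introduced here so the commands can be previewed without touching AWS.

```shell
#!/bin/sh
# Example values taken from the pipeline in this post; override them as needed.
BUCKET="${BUCKET:-test-bucket-carnival}"
FUNCTION_NAME="${FUNCTION_NAME:-lambdatest}"
ARTIFACT="${ARTIFACT:-test.zip}"

# Hypothetical helper: print the command when DRY_RUN=1, otherwise execute it.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Hypothetical function covering the install, zip, upload, and update steps.
deploy() {
  run npm install &&
  run zip -r "$ARTIFACT" node_modules index.js test.js &&
  run aws s3 cp "$ARTIFACT" "s3://$BUCKET/$ARTIFACT" &&
  run aws lambda update-function-code \
    --function-name "$FUNCTION_NAME" \
    --s3-bucket "$BUCKET" \
    --s3-key "$ARTIFACT"
}
```

Calling `DRY_RUN=1 deploy` prints the four commands without running them, and calling `deploy` on its own runs them in order and stops at the first failure.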

Prerequisites:

- S3 bucket on AWS

- Lambda function

- IAM Role

- bitbucket-pipelines.yml file

- AWS access key ID and secret access key

- Environment Variables

How to set up continuous deployment to a Lambda function using Bitbucket Pipelines

- Create a bitbucket-pipelines.yml file at the root of your project

- In the Bitbucket repository, go to Settings and add the following Repository variables:

AWS_SECRET_ACCESS_KEY

AWS_LAMBDA_FUNCTION_NAME

AWS_ACCESS_KEY_ID

AWS_REGION

AWS_ROLE

All of the above variables are required to connect to the S3 bucket and the Lambda function from the Bitbucket pipeline.
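Because a missing variable usually only shows up as a failure late in the build, it can help to check for them at the start of the script. The following is a minimal sketch, assuming the variable names listed above; the `missing_vars` function and `REQUIRED_VARS` list are hypothetical names introduced for this example and are not part of Bitbucket or the AWS CLI.

```shell
#!/bin/sh
# Variable names taken from the repository variables listed above.
REQUIRED_VARS="AWS_ACCESS_KEY_ID AWS_SECRET_ACCESS_KEY AWS_REGION AWS_ROLE AWS_LAMBDA_FUNCTION_NAME"

# Hypothetical helper: report every required variable that is unset or empty.
# Returns 0 when all variables are present and 1 otherwise.
missing_vars() {
  missing=""
  for name in $REQUIRED_VARS; do
    # Look up the value of the variable whose name is stored in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      missing="$missing $name"
    fi
  done
  if [ -n "$missing" ]; then
    echo "Missing variables:$missing" >&2
    return 1
  fi
  return 0
}
```

One way to use it is to put `missing_vars || exit 1` at the top of a deploy script, so the build fails immediately with a list of the variables that still need to be added.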

The following bitbucket-pipelines.yml sets up continuous deployment to the Lambda function for a Node.js application:

    image: lambci/lambda:build-nodejs6.10

    pipelines:
      default:
        - step:
            deployment: production
            script:
              # Install the project dependencies
              - npm install
              # Only needed for the commented-out node-lambda alternative below
              - npm install node-lambda -g
              # -r is required so that the contents of node_modules are included
              - zip -r test.zip ./node_modules index.js test.js
              # Upload the artifact to the S3 bucket
              - aws s3 cp test.zip s3://test-bucket-carnival
              # Point the Lambda function at the new artifact
              - aws lambda update-function-code --function-name lambdatest --s3-bucket test-bucket-carnival --s3-key test.zip
              #- node-lambda deploy -a $AWS_ACCESS_KEY_ID -s $AWS_SECRET_ACCESS_KEY -o $AWS_ROLE -r $AWS_REGION -n $AWS_LAMBDA_FUNCTION_NAME --excludeGlobs "bitbucket-pipelines.yml"
            caches:
              - node

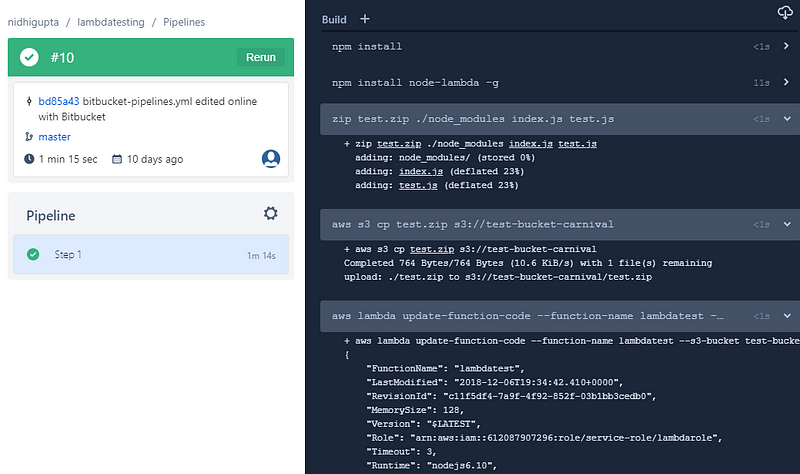

Logs of the Bitbucket pipeline for the Lambda function

Bitbucket Pipeline for Lambda function

Congratulations, we have successfully set up continuous deployment to a Lambda function using Bitbucket Pipelines.