In my previous blog post, we saw how to access an application externally using a Service object. There are two options for getting external traffic into the cluster:

- NodePort

- LoadBalancer

On public cloud providers, the LoadBalancer option is usually preferred.


Limitations:

1. A single LoadBalancer can point to only one Kubernetes Service object.

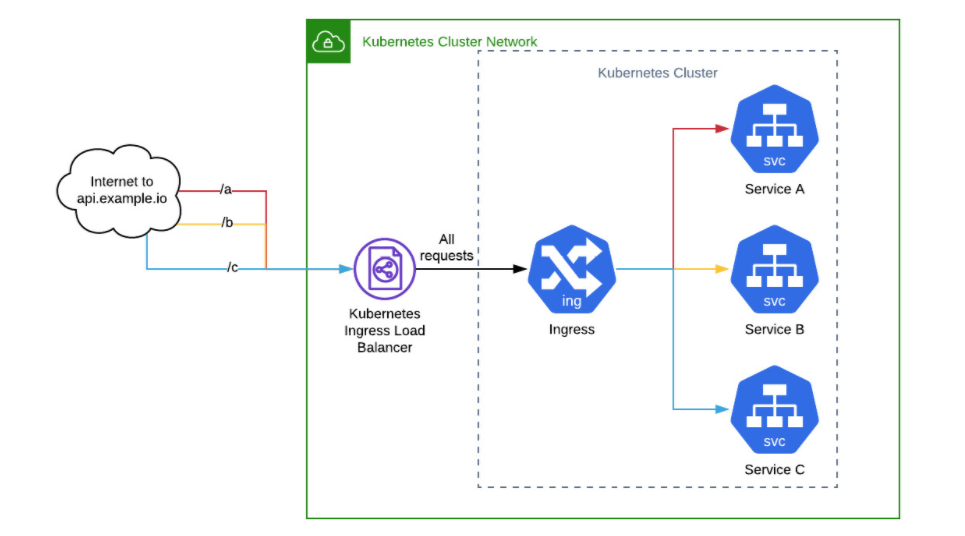

So if you have 100 microservices, you need 100 load balancers, which is very expensive.

2. Suppose you have a web service running at test.com, and you want test.com/users to go to one microservice and test.com/notifications to go to a completely separate microservice. Before Ingress, you had to set up your own Kubernetes Service and handle the path routing to your apps yourself.

Both limitations are addressed by the Kubernetes Ingress resource.

What is Ingress?

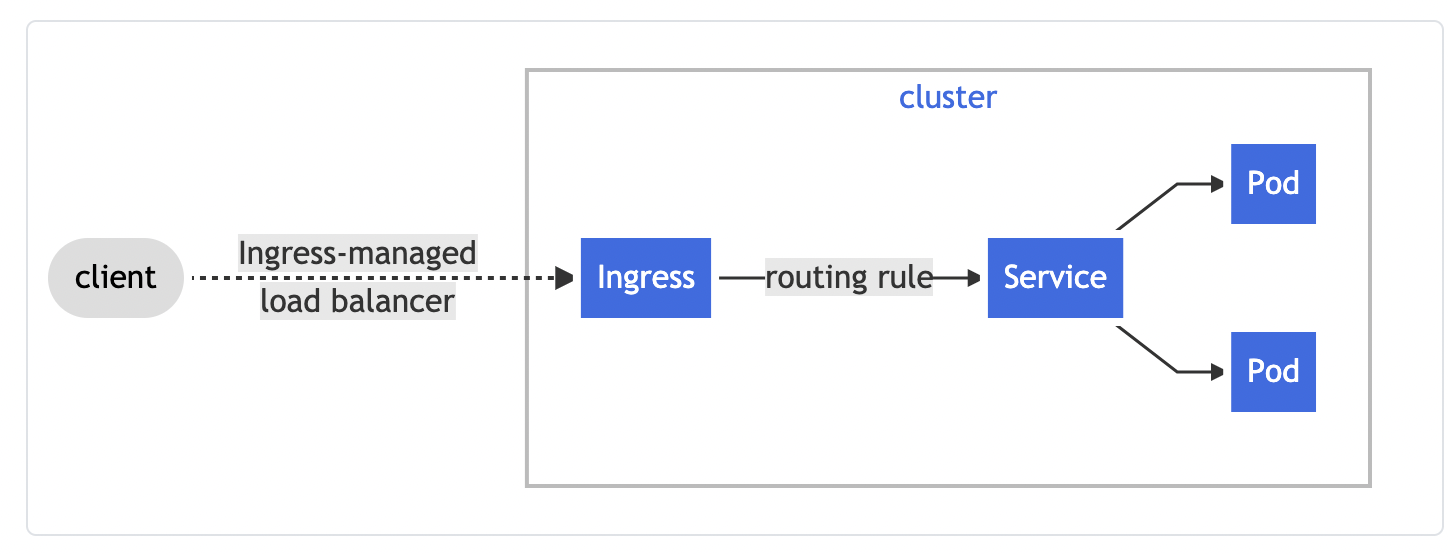

An API object that manages external access to the services in a cluster, typically HTTP.

It also provides

- load balancing

- SSL termination

- name-based virtual hosting
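To make the SSL termination item concrete, here is a minimal sketch of an Ingress that terminates TLS for one host. It assumes a TLS Secret named test-com-tls (a hypothetical name) already exists in the same namespace, and it routes to the web Service on port 8080 that is deployed later in this post. It uses the same v1beta1 API version as the other manifests here.

```yaml
# Sketch only: tls-example and test-com-tls are hypothetical names.
apiVersion: networking.k8s.io/v1beta1
kind: Ingress
metadata:
  name: tls-example
spec:
  tls:
  - hosts:
    - test.com
    # Secret of type kubernetes.io/tls holding the certificate and key for test.com
    secretName: test-com-tls
  rules:
  - host: test.com
    http:
      paths:
      - path: /
        backend:
          serviceName: web
          servicePort: 8080
```

With a manifest like this, the ingress controller handles HTTPS for test.com and forwards plain HTTP to the backend Service.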

In the simplest case, an Ingress sends all traffic to one Service.

An Ingress controller is responsible for fulfilling the Ingress.

What is an Ingress Controller?

For the Ingress resource to work, the cluster must have an ingress controller running. Many ingress controllers are available; the official Kubernetes documentation lists them.

Here we will use the NGINX ingress controller and deploy it on GKE, following its documentation.

Prerequisites:

- Functional Kubernetes Cluster configured

- Clone this git repo

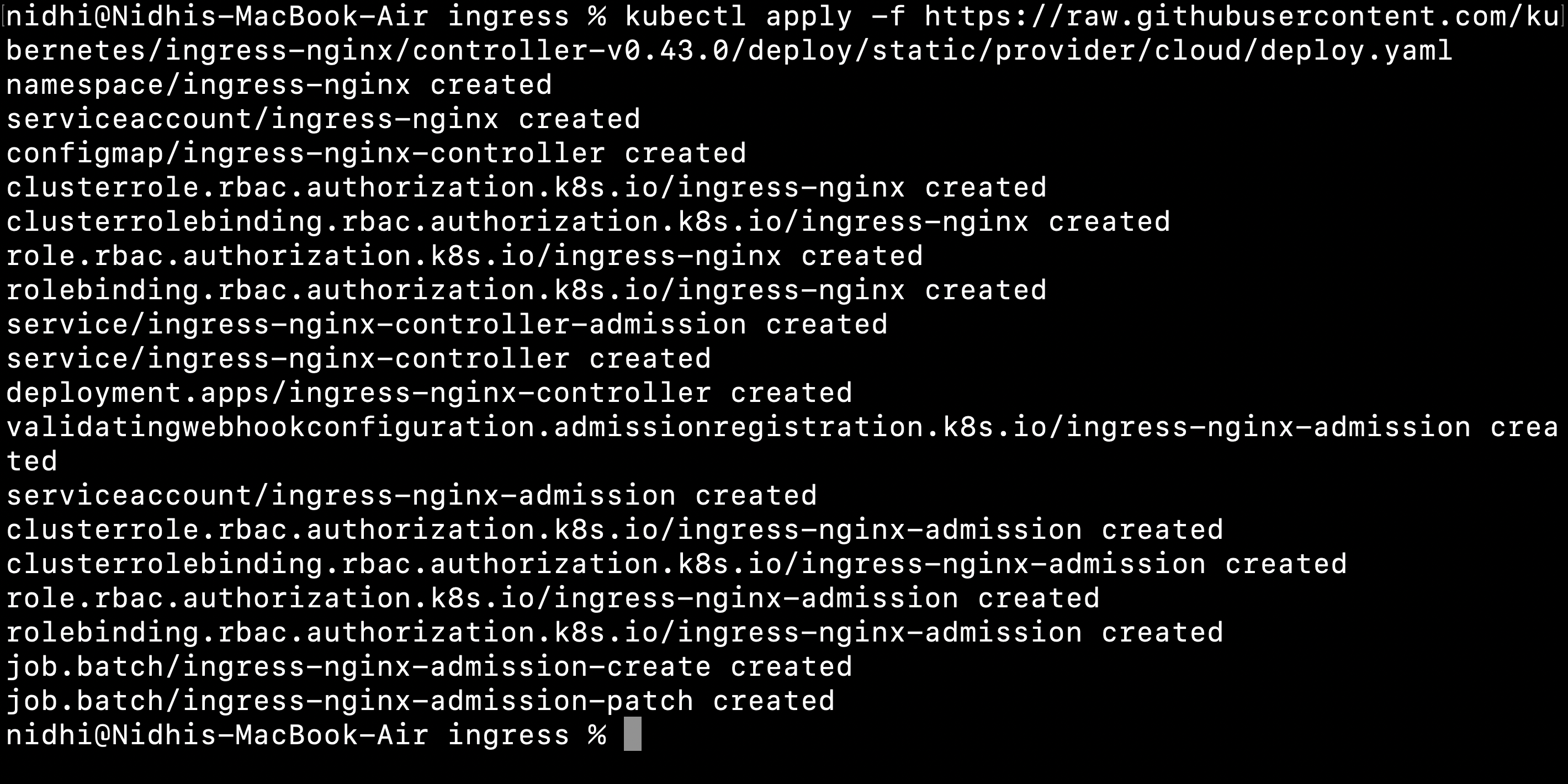

Create an ingress controller

On GKE, run the command below to create an ingress controller in the cluster:

    kubectl apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v0.43.0/deploy/static/provider/cloud/deploy.yaml

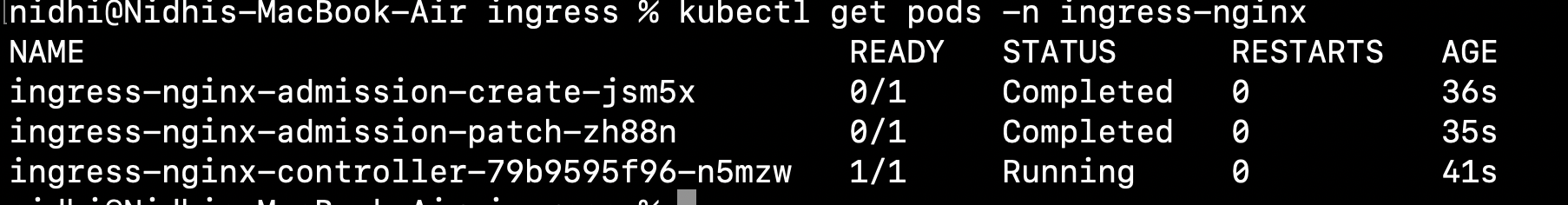

    kubectl get pods -n ingress-nginx

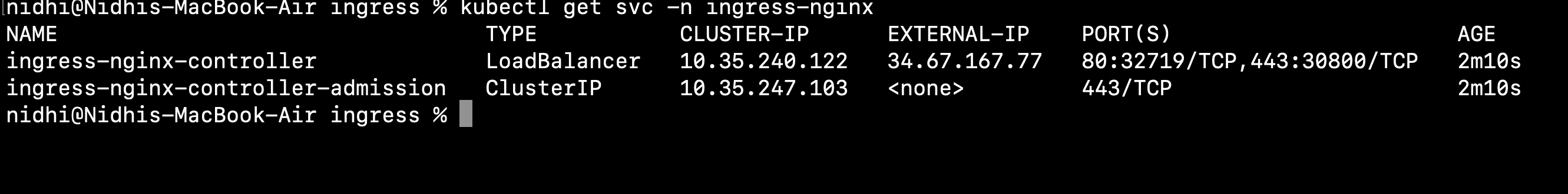

    kubectl get svc -n ingress-nginx

We can see that two Services are running for the NGINX ingress controller.

Basic Example of using Kubernetes Ingress Resource

Now we will run a sample application, defined in the YAML file below, and expose it externally using a Kubernetes Ingress resource.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: web
      namespace: default
    spec:
      selector:
        matchLabels:
          run: web
      template:
        metadata:
          labels:
            run: web
        spec:
          containers:
          - image: gcr.io/google-samples/hello-app:1.0
            imagePullPolicy: IfNotPresent
            name: web
            ports:
            - containerPort: 8080
              protocol: TCP
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: web
      namespace: default
    spec:
      ports:
      - port: 8080
        protocol: TCP
        targetPort: 8080
      selector:
        run: web
      type: NodePort

Run the commands below:

    cd ingress/basic-example
    kubectl apply -f web.yaml

Now we will deploy an ingress resource

    apiVersion: networking.k8s.io/v1beta1
    kind: Ingress
    metadata:
      name: basic-ingress
    spec:
      backend:
        serviceName: web
        servicePort: 8080
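The manifest above uses networking.k8s.io/v1beta1, which was removed in Kubernetes 1.22. On newer clusters the same default-backend Ingress can be written with networking.k8s.io/v1, available since Kubernetes 1.19. A minimal sketch of that equivalent, assuming the same web Service on port 8080:

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: basic-ingress
spec:
  # v1 renames spec.backend to spec.defaultBackend
  defaultBackend:
    service:
      # v1 replaces serviceName/servicePort with a nested service block
      name: web
      port:
        number: 8080
```

Clusters on 1.22 or later will likely also need a newer NGINX ingress controller release than the v0.43.0 used in this post, so check the controller's compatibility notes before switching.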

Run the commands below:

    kubectl apply -f basic-ingress.yaml
    kubectl get ingress

If the ADDRESS column is still empty, wait a moment and run kubectl get ingress again.

Note: It might take a few minutes for GKE to allocate an external IP address and set up forwarding rules before the load balancer is ready to serve your application. You might get errors such as HTTP 404 or HTTP 500 until the load balancer configuration is propagated across the globe.

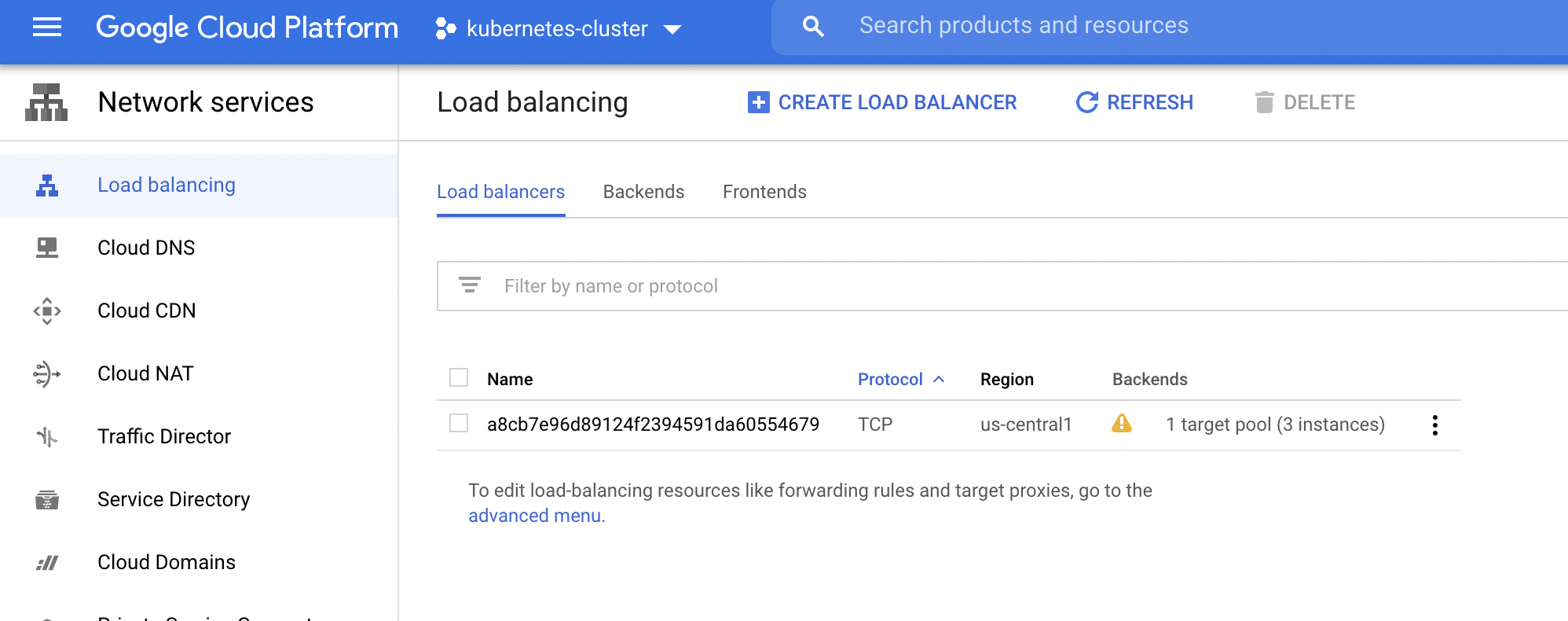

In your Google Cloud console, you will see that one load balancer has been created.

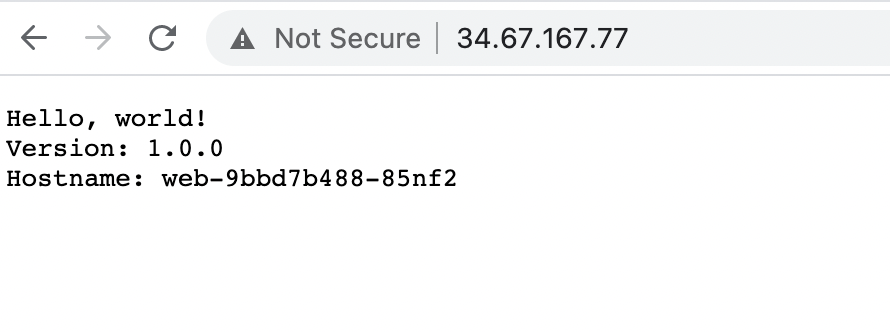
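One thing to watch on GKE: an Ingress that names no ingress class is, to my understanding, handled by GKE's built-in controller, which would explain the Google Cloud load balancer created above. If you want the NGINX controller installed earlier to serve the Ingress instead, a common approach for controller versions of this era is the kubernetes.io/ingress.class annotation. The sketch below is the same basic-ingress manifest with that annotation added; verify the behavior against your controller version before relying on it.

```yaml
apiVersion: networking.k8s.io/v1beta1
kind: Ingress
metadata:
  name: basic-ingress
  annotations:
    # Asks the NGINX ingress controller, rather than the GKE default, to handle this Ingress
    kubernetes.io/ingress.class: "nginx"
spec:
  backend:
    serviceName: web
    servicePort: 8080
```

With this annotation in place, the address reported by kubectl get ingress should be the external IP of the ingress-nginx controller Service, not a newly provisioned load balancer.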

Now you can open this IP address in the browser.

In this example, no host is specified, so the rule applies to all inbound HTTP traffic through the IP address specified. If a host is provided (for example, test.com), the rules apply to that host.

Simple Fanout

A fanout configuration routes traffic from a single IP address to more than one Service, based on the HTTP URI being requested.

This YAML file creates two Deployments (web and web2) and two Services (web and web2):

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: web
      namespace: default
    spec:
      selector:
        matchLabels:
          run: web
      template:
        metadata:
          labels:
            run: web
        spec:
          containers:
          - image: gcr.io/google-samples/hello-app:1.0
            imagePullPolicy: IfNotPresent
            name: web
            ports:
            - containerPort: 8080
              protocol: TCP
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: web2
      namespace: default
    spec:
      selector:
        matchLabels:
          run: web2
      template:
        metadata:
          labels:
            run: web2
        spec:
          containers:
          - image: gcr.io/google-samples/hello-app:2.0
            imagePullPolicy: IfNotPresent
            name: web2
            ports:
            - containerPort: 8080
              protocol: TCP
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: web
      namespace: default
    spec:
      ports:
      - port: 8080
        protocol: TCP
        targetPort: 8080
      selector:
        run: web
      type: NodePort
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: web2
      namespace: default
    spec:
      ports:
      - port: 8080
        protocol: TCP
        targetPort: 8080
      selector:
        run: web2
      type: NodePort

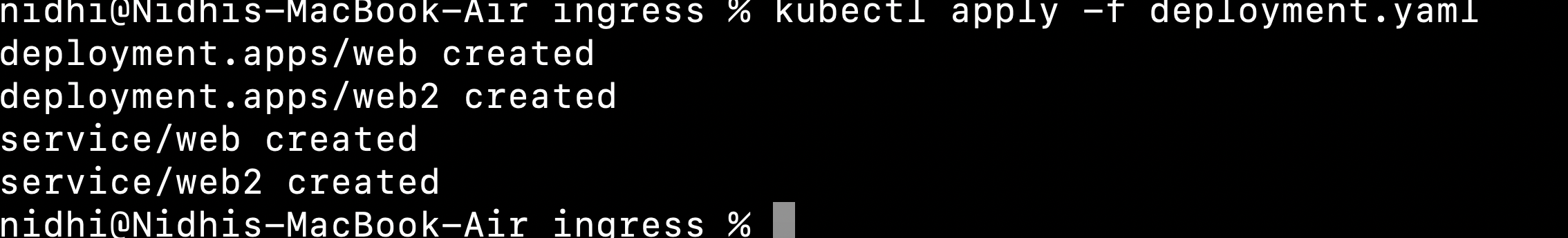

Run the commands below:

    cd ingress/fanout-example
    kubectl apply -f web.yaml

Now we will create a fanout Ingress configuration:

    apiVersion: networking.k8s.io/v1beta1
    kind: Ingress
    metadata:
      name: fanout-ingress
    spec:
      rules:
      - http:
          paths:
          - path: /v1/*
            backend:
              serviceName: web
              servicePort: 8080
          - path: /v2/*
            backend:
              serviceName: web2
              servicePort: 8080
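For clusters where v1beta1 is no longer served, the fanout rules can be sketched in networking.k8s.io/v1 as follows. In v1 every path must declare a pathType; this sketch assumes Prefix matching on /v1 and /v2, which is not character-for-character the same as the /v1/* wildcard form above (that form is controller-specific and would pair with pathType: ImplementationSpecific).

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: fanout-ingress
spec:
  rules:
  - http:
      paths:
      # Prefix matches /v1 and anything under /v1/
      - path: /v1
        pathType: Prefix
        backend:
          service:
            name: web
            port:
              number: 8080
      # Prefix matches /v2 and anything under /v2/
      - path: /v2
        pathType: Prefix
        backend:
          service:
            name: web2
            port:
              number: 8080
```

Prefix matching works on whole path segments, so /v1 matches /v1/anything but not /v10.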

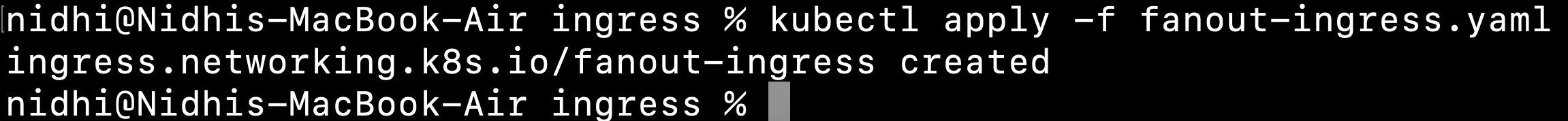

Run the command below:

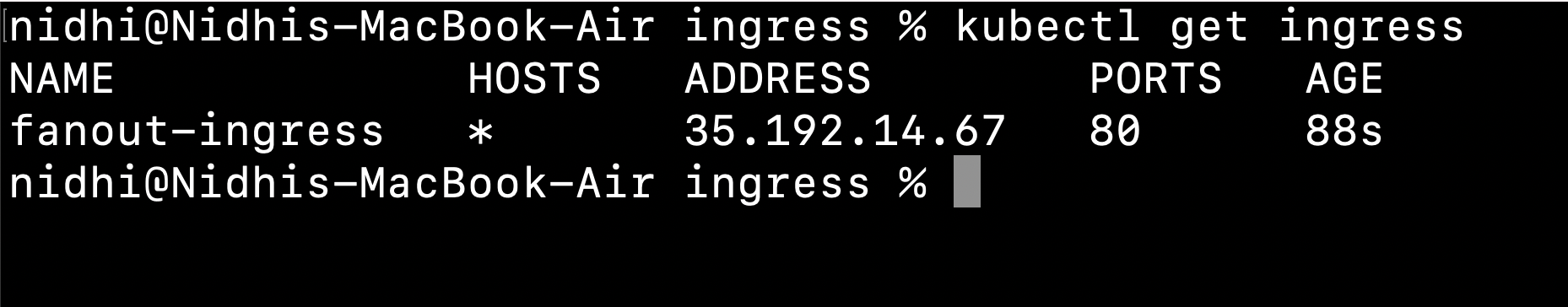

    kubectl apply -f fanout-ingress.yaml

Name-Based hosting

For name-based hosting, we provide the hostname configuration in our YAML file:

apiVersion: networking.k8s.io/v1beta1

kind: Ingress

metadata:

name: host-ingress

spec:

rules:

- host: "test.com"

http:

paths:

- path: /test

backend:

serviceName: web

servicePort: 8080

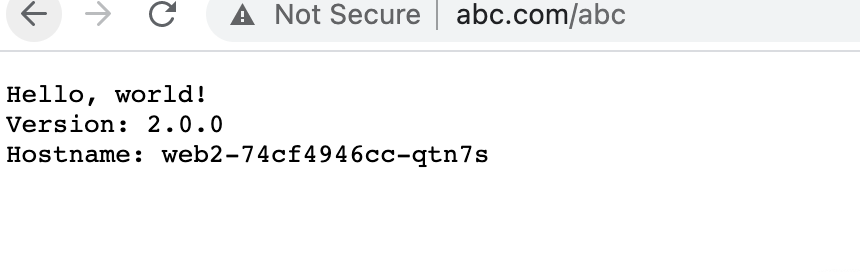

- host: "abc.com"

http:

paths:

- path: /abc

backend:

serviceName: web2

servicePort: 8080

Run the commands below:

    cd ingress/name-host
    kubectl apply -f web.yaml
    kubectl apply -f host-ingress.yaml

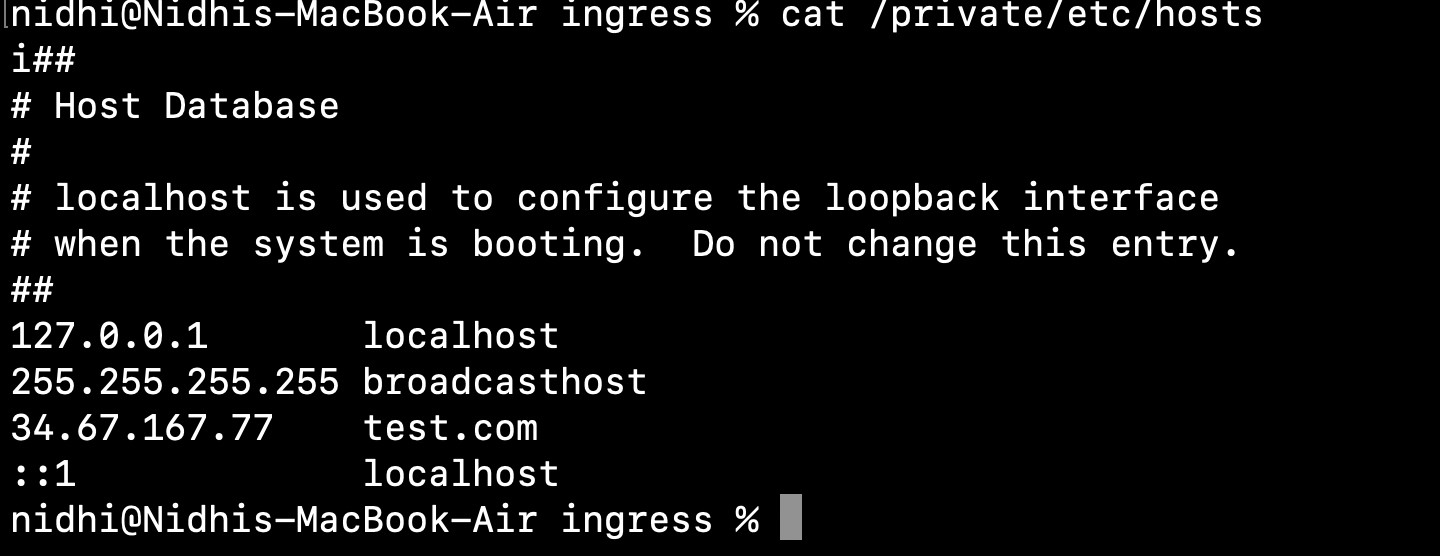

To access this URL from a Mac, edit the /etc/hosts file and add a line that maps the Ingress IP address to the hostname:

    <IP of ingress> test.com

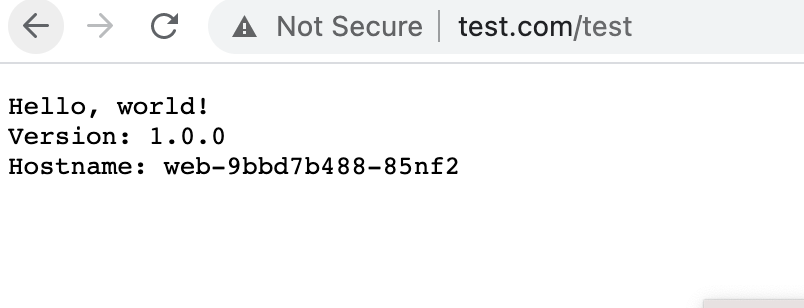

Now try to access http://test.com/test in the browser.
